AI agents are no longer a novelty; enterprises now run them in production. As those deployments scale, however, developers face a foundational choice: a fully managed service for faster delivery, or modular infrastructure for greater flexibility and control.


On the managed side, AWS introduced Agents for Amazon Bedrock in 2023: a service that lets developers stand up AI agents quickly, without worrying about the underlying orchestration.

Amazon Bedrock Agents: The Managed Way

Amazon Bedrock Agents is a fully managed capability designed for developers who want to ship agents quickly without managing orchestration logic themselves. AWS handles the heavy lifting of the Reason + Act (ReAct) loop, while you simply configure the agent’s behaviour.
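To make the ReAct loop concrete, here is a minimal conceptual sketch of what a managed service runs on your behalf: the model reasons about the next step, the agent acts by calling a tool, and the result is fed back as an observation until the model produces an answer. This is not Bedrock’s internal implementation; `scripted_model` is a hypothetical stand-in for an LLM call and `get_order_status` is a hypothetical tool.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> function taking one string argument.
TOOLS: dict[str, Callable[[str], str]] = {
    "get_order_status": lambda order_id: f"Order {order_id} is out for delivery",
}


def scripted_model(history: list[str]) -> dict:
    """Hypothetical stand-in for an LLM call: picks the next step from the transcript."""
    if not any(line.startswith("Observation:") for line in history):
        return {"thought": "I need the order status.", "action": "get_order_status", "input": "A123"}
    # Once a tool result is available, answer with it.
    return {"thought": "I have what I need.", "final_answer": history[-1].removeprefix("Observation: ")}


def react_loop(question: str, model: Callable[[list[str]], dict], max_steps: int = 5) -> str:
    """Alternate Reason (model chooses a step) and Act (run a tool) until a final answer."""
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        step = model(history)
        history.append(f"Thought: {step['thought']}")
        if "final_answer" in step:
            return step["final_answer"]
        # Act: call the chosen tool and feed its output back as an observation.
        observation = TOOLS[step["action"]](step["input"])
        history.append(f"Action: {step['action']}({step['input']})")
        history.append(f"Observation: {observation}")
    raise RuntimeError("No final answer within max_steps")


print(react_loop("Where is my order A123?", scripted_model))  # Order A123 is out for delivery
```

With Bedrock Agents you write only the tools and the instructions; the loop, the prompts that drive each reasoning step, and the transcript state are managed for you.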

Key Benefits:

- Fully Managed Orchestration: You supply the instructions and the tools (as action groups backed by AWS Lambda), while Bedrock automatically determines the sequence of actions, handles prompt engineering, and manages session state.

- Rapid Deployment: Gets you to production quickly for well-defined tasks like “Check my Deliveroo status” or “Summarize the board meeting notes.”

- Integrated Knowledge Bases: Out-of-the-box integration with Bedrock Knowledge Bases that lets you plug in Retrieval-Augmented Generation (RAG) capabilities without any custom wiring.
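The tool side of a Bedrock agent is typically a Lambda function attached to an action group. Below is a minimal sketch of such a handler, assuming the function-details event format (a `function` name plus a `parameters` list of name/type/value entries) and the matching `functionResponse` reply shape; verify the field names against the current Bedrock Agents documentation. The `get_order_status` function, the `order_id` parameter, and the `ORDERS` data are hypothetical, standing in for a delivery-status task like the one above.

```python
import json

# Hypothetical order data; a real action group would query your backend.
ORDERS = {"A123": "out for delivery", "B456": "delivered"}


def lambda_handler(event, context):
    """Handle a Bedrock Agents action group call (function-details format, assumed)."""
    # Parameters arrive as a list of {"name", "type", "value"} dicts.
    params = {p["name"]: p["value"] for p in event.get("parameters", [])}

    if event["function"] == "get_order_status":
        order_id = params.get("order_id", "")
        body = json.dumps({"order_id": order_id, "status": ORDERS.get(order_id, "not found")})
    else:
        body = json.dumps({"error": f"unknown function {event['function']}"})

    # Echo the action group and function back so the agent can match the result.
    return {
        "messageVersion": "1.0",
        "response": {
            "actionGroup": event["actionGroup"],
            "function": event["function"],
            "functionResponse": {"responseBody": {"TEXT": {"body": body}}},
        },
    }


# Local usage with a hand-written sample event (hypothetical values).
sample_event = {
    "messageVersion": "1.0",
    "actionGroup": "orders",
    "function": "get_order_status",
    "parameters": [{"name": "order_id", "type": "string", "value": "A123"}],
}
print(lambda_handler(sample_event, None)["response"]["functionResponse"]["responseBody"]["TEXT"]["body"])
```

Everything outside this function, including deciding when to call it and what to do with the result, is the managed orchestration described above.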

However, as agent complexity grows — and with the rising popularity of open-source frameworks like Strands Agents and LangGraph — a fully managed approach starts to show its limits. Multi-agent collaboration, custom memory strategies, and framework integration demand finer control over how agents are built and run.

To address these needs, AWS released Amazon Bedrock AgentCore in 2025: a suite of modular, serverless services for building production-grade agents.

Amazon Bedrock AgentCore: The Modular Way

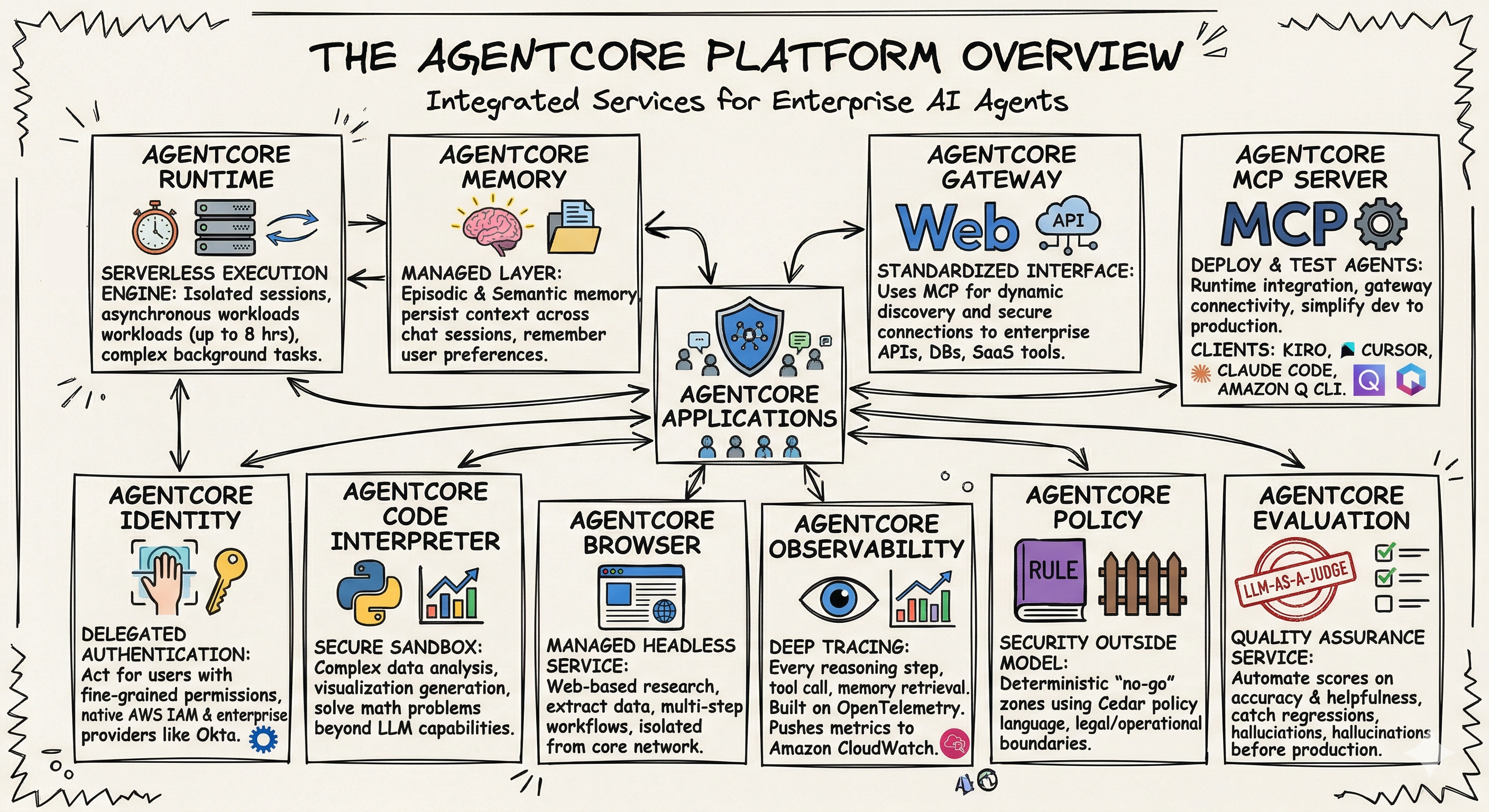

Unlike Bedrock Agents, AgentCore is framework-agnostic. Whether you use LangGraph, CrewAI, LlamaIndex, or another framework, AgentCore provides the secure, scalable infrastructure to run it through the service pillars below.

The Service Pillars of AgentCore:

These pillars serve as the foundation for scaling AI agents from simple prototypes to secure, high-performance production systems.


- AgentCore Runtime: A serverless runtime built for the demands of agentic execution, offering secure session isolation, asynchronous processing, and support for workloads running up to 8 hours, all without managing any underlying infrastructure.

- AgentCore Memory: Goes beyond session context, providing episodic and semantic memory that spans multiple conversations and gives agents a persistent understanding of user preferences and past interactions.

- AgentCore Gateway: A standardized interface that uses the Model Context Protocol (MCP) to let agents dynamically discover and securely connect to enterprise APIs, databases, and third-party SaaS tools.

- AgentCore Identity: Handles the authentication complexity that comes with agentic systems, letting agents act on behalf of specific users with scoped permissions, backed by native integration with AWS IAM and identity providers such as Okta.

- AgentCore Code Interpreter: Gives agents the ability to execute real Python code in a secure, isolated environment, enabling complex data analysis, visualisation, and mathematical reasoning that text generation alone can’t handle.

- AgentCore Browser: A managed, headless browsing service for web-based research that lets agents securely navigate websites, access data, and perform multi-step web workflows while keeping execution isolated from your core network.

- AgentCore Observability: Built on OpenTelemetry, AgentCore Observability gives you end-to-end visibility into every reasoning step, tool call, and memory retrieval, with real-time metrics flowing directly into Amazon CloudWatch.

- AgentCore MCP Server: Helps you transform, deploy, and test AgentCore-compatible agents directly from your preferred development environment. With built-in support for runtime integration, gateway connectivity, and agent lifecycle management, the MCP server simplifies moving from local development to production deployment on AgentCore. Supported MCP clients include Kiro, Cursor, Claude Code, and Amazon Q CLI.

- AgentCore Policy: Enforces guardrails outside the model itself, using the Cedar policy language to define hard boundaries. Regardless of what the prompt says, agents deterministically stay within your legal and operational limits.

- AgentCore Evaluation: Brings automated quality assurance to agent development. Using the LLM-as-a-Judge approach, it continuously scores agent performance on criteria such as accuracy and helpfulness, giving you an early-warning system for regressions and hallucinations.
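The key idea behind the Policy pillar is that authorization happens in ordinary, deterministic code between the model and its tools, so no prompt can talk its way past it. The sketch below illustrates that idea in plain Python; it is not Cedar syntax and not the AgentCore Policy API, and the `ToolCall` shape, action names, and 100-unit refund cap are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ToolCall:
    """A tool invocation proposed by the model (hypothetical shape)."""
    user_role: str
    action: str
    amount: float = 0.0


# Hypothetical rules, evaluated outside the model.
# Each returns a denial reason, or None if the call is acceptable.
def only_known_actions(call: ToolCall) -> Optional[str]:
    allowed = {"get_order_status", "issue_refund"}
    return None if call.action in allowed else f"action {call.action!r} is not permitted"


def cap_refunds(call: ToolCall) -> Optional[str]:
    if call.action == "issue_refund" and call.amount > 100 and call.user_role != "supervisor":
        return "refunds over 100 require a supervisor"
    return None


RULES: list[Callable[[ToolCall], Optional[str]]] = [only_known_actions, cap_refunds]


def authorize(call: ToolCall) -> tuple[bool, str]:
    """Run every rule; any single denial blocks the call, whatever the prompt said."""
    for rule in RULES:
        reason = rule(call)
        if reason is not None:
            return False, reason
    return True, "allowed"


print(authorize(ToolCall("customer", "issue_refund", amount=250.0)))
# (False, 'refunds over 100 require a supervisor')
```

Because the check sees only the structured tool call, the same input always yields the same decision, which is what "deterministic" buys you over instructions written into the prompt.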

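The LLM-as-a-Judge pattern behind automated evaluation can be sketched in a few lines: a judge scores each test case on several dimensions, the scores are averaged, and any dimension that falls below a stored baseline is flagged. This is a minimal sketch assuming you keep baseline scores from a previous release; `stub_judge` is a hypothetical placeholder for a real judge-model call, the dimensions and 0.05 tolerance are illustrative, and none of this is the AgentCore Evaluation API.

```python
from statistics import mean
from typing import Callable

# A judge takes (question, answer) and returns a score in [0, 1] per dimension.
Judge = Callable[[str, str], dict[str, float]]


def stub_judge(question: str, answer: str) -> dict[str, float]:
    """Hypothetical stand-in for an LLM-as-a-Judge call."""
    helpfulness = 1.0 if len(answer.split()) >= 3 else 0.4
    accuracy = 0.0 if "not sure" in answer.lower() else 0.9
    return {"accuracy": accuracy, "helpfulness": helpfulness}


def evaluate(cases: list[tuple[str, str]], judge: Judge) -> dict[str, float]:
    """Average each dimension's score over all (question, answer) test cases."""
    scores = [judge(q, a) for q, a in cases]
    return {dim: mean(s[dim] for s in scores) for dim in scores[0]}


def regressions(current: dict[str, float], baseline: dict[str, float], tolerance: float = 0.05) -> list[str]:
    """Dimensions whose score dropped more than `tolerance` below the baseline."""
    return [dim for dim, base in baseline.items() if current.get(dim, 0.0) < base - tolerance]


cases = [
    ("Where is order A123?", "It is out for delivery."),
    ("What is the refund policy?", "I am not sure."),
]
baseline = {"accuracy": 0.9, "helpfulness": 1.0}
current = evaluate(cases, stub_judge)
print(regressions(current, baseline))  # ['accuracy']
```

Running a check like this on every change turns a drop in judged accuracy into an alert before users see it.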
Differences in Features

| Feature | Bedrock Agents | Bedrock AgentCore |
|---|---|---|
| Primary Goal | Simplicity & Speed. Fully managed “no-code/low-code” orchestration. | Control & Scale. Infrastructure for custom agents and multi-agent systems. |
| Orchestration | Proprietary AWS orchestration (managed loop). | Bring your own (LangGraph, CrewAI, LlamaIndex, or custom Python). |
| Model Choice | Limited to models supported natively by Bedrock. | Model Agnostic. Any model on Bedrock or external models via API. |
| Execution | Orchestrated by Bedrock; you only provide Lambda “Action Groups.” | AgentCore Runtime. Serverless microVMs (Fargate-like) running your full agent code. |
| Memory | Managed session memory (standard context). | Advanced Memory. Persistent, episodic, and long-term memory across sessions. |
| Connectivity | Action Groups (Lambda) and Knowledge Bases. | AgentCore Gateway. Open-standard MCP (Model Context Protocol) and A2A support. |
| Security | Bedrock Guardrails and IAM. | Deep Isolation. Per-session microVM isolation and native Identity (OAuth/Cognito). |
| Complexity | Best for linear, task-oriented workflows. | Best for complex, long-running (up to 8h), and multi-agent systems. |

Pricing

- Amazon Bedrock offers flexible, pay-as-you-go pricing based on the number of tokens processed (for text) or images generated, with no upfront costs. See the Amazon Bedrock pricing page for details.

- Amazon Bedrock AgentCore features a flexible, pay-as-you-go pricing model with no upfront costs or minimum commitments. Its capabilities, including Runtime, Memory, and Code Interpreter, are fully modular, so you can use each service independently and pay only for active usage. This structure supports a “start small, scale fast” approach to agent development. See the Amazon Bedrock AgentCore pricing page for details.
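Token-based pricing is simple arithmetic, but it is easy to underestimate for agents, because a single user request can trigger several model calls as the agent reasons and acts. The back-of-envelope sketch below multiplies tokens by per-1,000-token rates; the rates, token counts, and monthly volume are placeholders, not real AWS prices, so substitute the figures for your model from the pricing page.

```python
def estimate_token_cost(input_tokens: int, output_tokens: int,
                        input_price_per_1k: float, output_price_per_1k: float) -> float:
    """Pay-as-you-go cost: tokens consumed times the per-1,000-token rate."""
    return (input_tokens / 1000) * input_price_per_1k + (output_tokens / 1000) * output_price_per_1k


# Placeholder rates in USD, NOT real AWS prices.
INPUT_RATE, OUTPUT_RATE = 0.003, 0.015

# Count tokens across every model call an agent makes for one request (hypothetical totals).
per_request = estimate_token_cost(2_000, 500, INPUT_RATE, OUTPUT_RATE)
monthly = per_request * 10_000  # hypothetical volume: 10,000 requests per month
print(f"per request: ${per_request:.4f}, per month: ${monthly:.2f}")
# per request: $0.0135, per month: $135.00
```

AgentCore services are billed on their own usage in addition to any model tokens, so a full estimate adds those charges on top.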

Get Started Today

Both services are available in the Amazon Bedrock Console. You can start by building a prototype with Bedrock Agents and, as your requirements evolve, migrate your logic to AgentCore for deeper customization.

- Amazon Bedrock AgentCore Documentation

- Explore Agent samples on GitHub

- Learn How to Build Production-ready AI agents

The Road Ahead…

The managed-versus-modular debate won’t disappear. But as open-source frameworks mature and AgentCore adds higher-level abstractions, the gap in developer experience between the two will narrow.

What’s likely to emerge is a layered adoption pattern: teams new to agents will begin with Bedrock Agents for fast time-to-value, then move to AgentCore as complexity grows. Platform teams will build internal agent infrastructure on AgentCore primitives and offer their own managed experience to developers.

The real shift isn’t technical but cultural. As agents evolve from simple assistants into autonomous systems running complex, long-running tasks, the bar for reliability, security, and auditability rises sharply. That’s where modular services win: not through simplicity, but through the transparency and control over the underlying infrastructure that managed services can’t provide.

The organisations that invest in understanding both layers today will be best positioned to build the next generation of autonomous systems faster and more securely.

Should you need help navigating your AI agent strategy on AWS, please reach out to us. We can help you build and scale with confidence.

We’re an AWS Generative AI Competency Partner with exclusive access to AWS co-investment opportunities for qualifying projects. Our AI Foundations solution extends existing landing zones with the governance and visibility needed to move AI projects from pilot to production. Let’s discuss your AI plans and explore available co-investment opportunities.

This blog is written exclusively by The Scale Factory team. We do not accept external contributions.